Overview

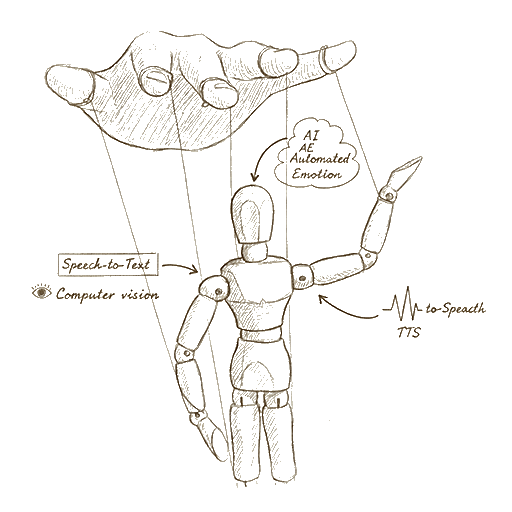

At Techdojo Ltd I worked primarily on VirtYou—a platform that gives AI a face and a body. VirtYou’s Automated Emotion (AE) software lets photorealistic 3D virtual actors deliver emotionally compelling, interactive, live performances using AI-generated dialogue as the script. Emotions are generated procedurally on the fly; avatars are spatial and embodied. AI (logic, language) meets AE (a physiological model of emotion) in software.

I was involved in setting up the foundation of the platform, the 3D asset and rendering pipeline, speech-and-viseme integration, and face-driven avatar control—as well as a major client product: Langara, a VR application for educator training.

VirtYou: Platform foundation

Speech, visemes, and dialogue

I worked on integrating Amazon Polly into the pipeline: receiving audio, extracting visemes (mouth shapes for lip-sync), and mapping those mouth shapes onto the avatar so speech and facial animation stay in sync. The dialogue itself can come from any source: a third-party LLM API, Hugging Face, or a proprietary API from the company using VirtYou. The system is designed so that whoever integrates VirtYou can plug in their own AI/text provider; my work was to make the pipeline (Polly → visemes → avatar movement) robust and reusable.
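To illustrate the Polly → visemes → avatar step, here is a minimal sketch rather than the production code. It assumes the viseme data arrives as Polly speech marks (newline-delimited JSON objects with `time`, `type`, and `value` fields). The morph-target names and the helpers `parseVisemeMarks` and `morphAt` are hypothetical, chosen only for illustration.

```typescript
// Shape of a speech mark as Amazon Polly emits it (one JSON object per line).
interface VisemeMark {
  time: number;  // milliseconds from the start of the audio
  type: string;  // "viseme" for the marks used here; Polly also emits "word", "sentence", ...
  value: string; // Polly viseme code, e.g. "p", "a", "sil"
}

// Hypothetical mapping from a few Polly viseme codes to morph-target names on the rig.
const VISEME_TO_MORPH: Record<string, string> = {
  p: "mouth_closed",
  a: "mouth_open",
  o: "mouth_round",
  sil: "mouth_rest",
};

// Parse the newline-delimited JSON and keep only viseme marks, sorted by time.
function parseVisemeMarks(jsonLines: string): VisemeMark[] {
  return jsonLines
    .split("\n")
    .map((line) => line.trim())
    .filter((line) => line.length > 0)
    .map((line) => JSON.parse(line) as VisemeMark)
    .filter((mark) => mark.type === "viseme")
    .sort((a, b) => a.time - b.time);
}

// Return the morph target that should be active at a given audio playback time.
// Unknown viseme codes fall back to the rest pose.
function morphAt(marks: VisemeMark[], audioTimeMs: number): string {
  let current = "mouth_rest";
  for (const mark of marks) {
    if (mark.time > audioTimeMs) break;
    current = VISEME_TO_MORPH[mark.value] ?? "mouth_rest";
  }
  return current;
}
```

Driving the lookup from the audio element's playback time, rather than from a separate timer, is one simple way to keep mouth shapes aligned with what the user actually hears.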

Face-driven avatar control

I implemented face tracking and mapping using MediaPipe: the user’s face movement and location are captured in real time and mapped onto the avatar, so the avatar can mirror the user’s expressions and live, user-driven performances become possible. This involved research and experimentation to get reliable tracking and a clean mapping from camera space to the 3D character.
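The camera-space to character-space mapping can be sketched roughly as follows. This is an illustration under assumptions, not the actual implementation: it takes a single normalized landmark (MediaPipe reports `x` and `y` in the 0 to 1 range with the origin at the top-left of the image), assumes the camera feed is not already mirrored, and uses hypothetical helper names (`landmarkToScene`, `smooth`) and scene extents.

```typescript
interface Point2 {
  x: number;
  y: number;
}

// Map a normalized image-space landmark to a scene-space offset centred on zero.
// x is flipped so the avatar behaves like a mirror; y is flipped because image y
// grows downward while scene y grows upward.
function landmarkToScene(landmark: Point2, halfWidth: number, halfHeight: number): Point2 {
  return {
    x: (0.5 - landmark.x) * 2 * halfWidth,
    y: (0.5 - landmark.y) * 2 * halfHeight,
  };
}

// Exponential smoothing to damp frame-to-frame tracking jitter.
// alpha = 1 follows the tracker exactly; smaller values trade latency for stability.
function smooth(previous: Point2, target: Point2, alpha: number): Point2 {
  return {
    x: previous.x + (target.x - previous.x) * alpha,
    y: previous.y + (target.y - previous.y) * alpha,
  };
}
```

Each frame, the smoothed result would be fed to the avatar's head or root transform; the smoothing factor is a tuning parameter, not a known value from the project.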

3D pipeline and web optimization

3D for the web is constrained: models need to be low-poly enough to run smoothly but still look realistic. I was responsible for setting up and optimizing 3D models for the web: import pipelines, LODs where useful, and strict polygon and texture budgets. I also worked extensively in Blender, building and preparing models, rigs, and animations (both for procedural use and for preset keyframe animations). Getting characters from Blender into the runtime without losing quality or performance was a core part of the pipeline.
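A budget check of this kind can be expressed in a few lines. The sketch below is illustrative only: the `AssetStats` and `Budget` shapes and any limits passed in are hypothetical, and the memory estimate assumes uncompressed RGBA8 textures (4 bytes per pixel, plus roughly one third extra for a full mipmap chain). GPU-compressed texture formats would come in well under this figure.

```typescript
// Approximate GPU memory for an uncompressed RGBA8 texture, in bytes.
function textureBytes(width: number, height: number, mipmaps = true): number {
  const base = width * height * 4; // 4 bytes per pixel
  return mipmaps ? Math.round((base * 4) / 3) : base; // full mip chain adds about 1/3
}

interface AssetStats {
  name: string;
  triangles: number;
  textures: Array<{ width: number; height: number }>;
}

interface Budget {
  maxTriangles: number;
  maxTextureBytes: number;
}

// Return a list of human-readable budget violations (empty when the asset fits).
function checkBudget(asset: AssetStats, budget: Budget): string[] {
  const problems: string[] = [];
  if (asset.triangles > budget.maxTriangles) {
    problems.push(`${asset.name}: ${asset.triangles} triangles exceeds ${budget.maxTriangles}`);
  }
  const total = asset.textures.reduce((sum, t) => sum + textureBytes(t.width, t.height), 0);
  if (total > budget.maxTextureBytes) {
    problems.push(`${asset.name}: ${total} texture bytes exceeds ${budget.maxTextureBytes}`);
  }
  return problems;
}
```

For scale: a single 1024×1024 RGBA8 texture is about 4 MiB before mipmaps, which is why texture resolution often matters as much as polygon count on the web.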

Web rendering and shadows

Complex 3D characters rarely look good in the browser by default, so I spent time on rendering quality: making avatars and scenes hold up on the web. That included experimenting with different shadow techniques (shadow maps, soft shadows, and the trade-off between performance and quality) and other rendering tweaks so the result felt believable without killing frame rate.
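One way to make the performance-versus-quality trade-off explicit is a small set of shadow quality tiers. The sketch below is an assumption-laden illustration, not the project's configuration: the tier names and map sizes are hypothetical, and `RendererLike` and `LightLike` are structural stand-ins for the Three.js properties involved (`renderer.shadowMap.enabled`, `light.castShadow`, `light.shadow.mapSize`), so the snippet stands alone without importing the library.

```typescript
// Structural stand-ins for the Three.js properties this sketch touches.
interface RendererLike {
  shadowMap: { enabled: boolean };
}

interface LightLike {
  castShadow: boolean;
  shadow: { mapSize: { width: number; height: number } };
}

type ShadowQuality = "off" | "low" | "high";

// Hypothetical tiers: larger shadow maps give sharper shadows but cost more GPU time and memory.
const SHADOW_MAP_SIZE: Record<ShadowQuality, number> = { off: 0, low: 512, high: 2048 };

// Apply a quality tier to a renderer and a shadow-casting light at setup time.
function applyShadowQuality(renderer: RendererLike, light: LightLike, quality: ShadowQuality): void {
  const enabled = quality !== "off";
  renderer.shadowMap.enabled = enabled;
  light.castShadow = enabled;
  if (enabled) {
    light.shadow.mapSize.width = SHADOW_MAP_SIZE[quality];
    light.shadow.mapSize.height = SHADOW_MAP_SIZE[quality];
  }
}
```

With Three.js itself, a `WebGLRenderer` and a `DirectionalLight` would satisfy these interfaces; meshes additionally need their `castShadow` and `receiveShadow` flags set for shadows to appear.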

What makes procedural animation believable: screen space motion

A big part of making procedural animations feel natural and believable is screen space motion—how the character’s movement reads on screen. I was involved in research and experimentation around this: how motion reads in 2D, how to blend procedural and keyframe animation, and how to keep characters feeling alive rather than robotic. That work fed back into both VirtYou’s core and into client apps like Langara.
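As a rough illustration of blending procedural and keyframe animation, the sketch below mixes two poses channel by channel with an eased weight. It is a generic technique shown under assumptions, not VirtYou's actual blending: poses are modelled as named scalar channels (for example morph-target weights), and the names `Pose`, `smoothstep`, and `blendPoses` are hypothetical. Joint rotations would normally need quaternion interpolation instead of a scalar mix.

```typescript
// A pose as named scalar channels, e.g. morph-target weights.
type Pose = Record<string, number>;

// Ease a 0..1 value so blends start and end gently instead of snapping.
function smoothstep(t: number): number {
  const c = Math.min(1, Math.max(0, t));
  return c * c * (3 - 2 * c);
}

// Blend from a keyframe pose (weight 0) to a procedural pose (weight 1).
// Channels missing from one pose are treated as 0.
function blendPoses(keyframe: Pose, procedural: Pose, weight: number): Pose {
  const w = smoothstep(weight);
  const out: Pose = {};
  const channels = Object.keys(keyframe).concat(Object.keys(procedural));
  for (const channel of channels) {
    const k = keyframe[channel] ?? 0;
    const p = procedural[channel] ?? 0;
    out[channel] = k + (p - k) * w;
  }
  return out;
}
```

Ramping the weight over a fraction of a second when a preset animation starts or ends avoids the visible pop that tends to make characters read as robotic.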

Langara: VR application for educator training

Langara is a VR application built for Langara College (Vancouver, Canada). It puts educators in a virtual childcare setting where they interact with virtual children showing different behaviors. Educators practice giving feedback on those behaviors, which makes it training for childcare specialists: learning to handle difficult or nuanced situations in a safe, repeatable environment.

Technical scope

The app uses VirtYou’s Automated Emotion and procedural animations, plus normal (keyframe) animations where needed—for example, preset child behaviors that trigger in response to the educator’s actions. Screen space motion and the same principles we developed for VirtYou are what make the virtual children’s movement feel believable and natural in VR.

My role on Langara included:

- VR implementation — Making the experience work in WebXR: interaction, camera, performance, and comfort in headset.

- Game logic — Behavior triggers, scenario flow, and how the virtual children respond to the educator.

- 3D asset pipeline — Characters and environment optimized for VR, with Blender for creation and retargeting.

- Speech and emotion — Polly integration and viseme mapping (same pipeline as VirtYou), so characters speak and emote correctly in VR.

- Animation — Blending VirtYou’s procedural system with preset animations and paying attention to screen space motion so movement reads well in VR.

Langara was my first full VR project; it deepened my work in animation techniques (web + Blender) and in building interactive, scenario-based training that feels real.

Stack and integration

Three.js (and WebXR for Langara) for 3D and VR. AWS Polly for TTS and visemes. Hugging Face and other APIs for AI/dialogue. Node.js for backend and orchestration. Blender for modeling, rigging, and animation. MediaPipe for face tracking. The hard part is the bridge: text and audio → visemes and emotion → 3D character, with everything staying in sync and performant on web and in VR.

Why this matters for EdTech

VirtYou and Langara are both about embodied, responsive characters in training contexts. The same pipeline—low-poly but believable 3D, procedural emotion, speech-driven animation, and careful attention to how motion reads—applies to any product where you want learners to interact with characters that feel present and natural. If you’re building something in that space (web or VR), this is the kind of technical depth I bring.

Related

- VirtYou — Product site

- VirtYou project — Overview and stack

- Langara project — VR educator training app